IAM Roles for Service Accounts – IRSA and EKS Pod Identity

Workload Identity

How Apps Get Access to AWS Services and Resources

When applications running in EKS Pods need to interact with AWS services, for example to upload files to an S3 bucket, read secrets from AWS Secrets Manager, or write records to DynamoDB, they require valid, securely scoped AWS credentials.

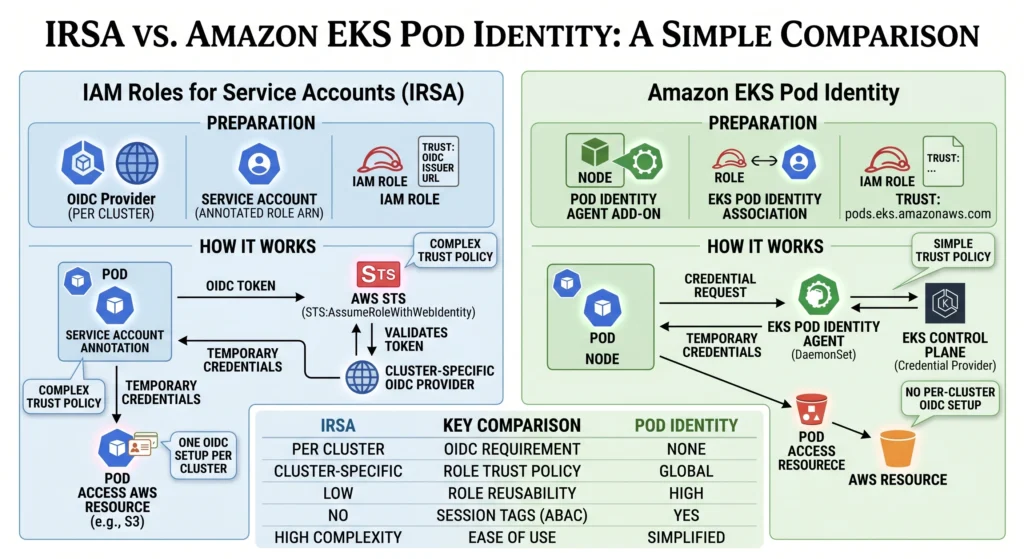

Currently, two primary architectures dictate how pods securely obtain AWS credentials: the legacy IAM Roles for Service Accounts (IRSA) and the modern Amazon EKS Pod Identity.

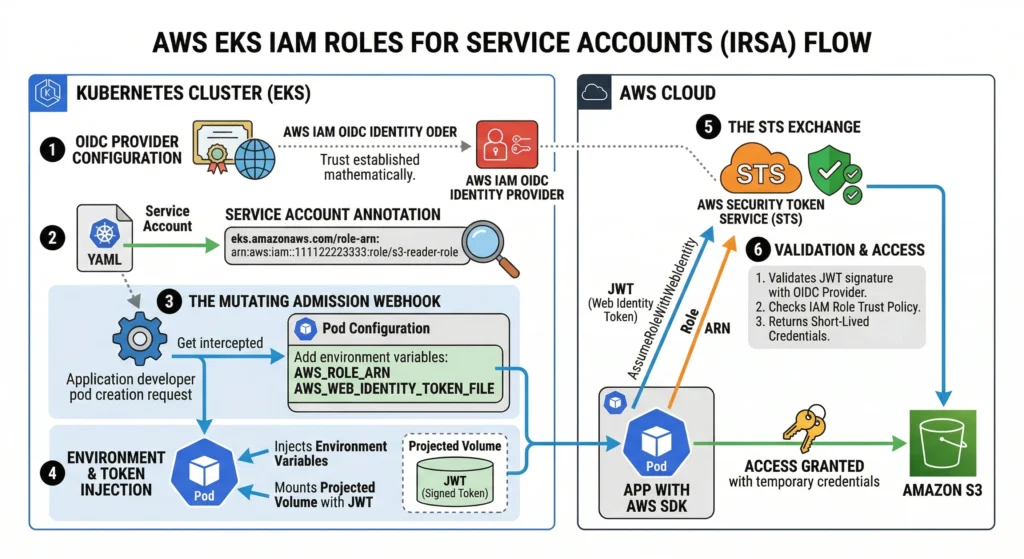

1. The Legacy Architecture: IAM Roles for Service Accounts (IRSA)

Introduced in 2019, IRSA was a major security breakthrough. Before IRSA, the only native way to give a Pod AWS permissions was to grant them to the underlying EC2 worker node, meaning every Pod on that node shared the same overly broad permissions. IRSA solved this by using OpenID Connect (OIDC) federation to grant fine-grained IAM roles at the Pod level, keyed on the Pod’s ServiceAccount.

How IRSA Works: The Cryptographic Handshake

- OIDC Provider Configuration: The EKS cluster exposes a public OIDC discovery endpoint. You must create an IAM OIDC Identity Provider in your AWS account that trusts this specific cluster endpoint.

- ServiceAccount Annotation: You create a Kubernetes `ServiceAccount` and apply a specific annotation linking it to your AWS IAM Role: `eks.amazonaws.com/role-arn: arn:aws:iam::111122223333:role/s3-reader-role`

- The Mutating Admission Webhook: When a developer deploys a Pod using this `ServiceAccount`, an EKS-managed webhook that is invisible to the developer intercepts the Pod creation request before it reaches the node.

- Environment & Token Injection: The webhook automatically modifies the Pod configuration to inject two critical environment variables: `AWS_ROLE_ARN` and `AWS_WEB_IDENTITY_TOKEN_FILE`. It also mounts a Kubernetes “Projected Volume” containing a cryptographically signed JSON Web Token (JWT) minted by the Kubernetes API server.

- The STS Exchange: When the AWS SDK (e.g., Python’s `boto3`) initializes inside your application, it detects those environment variables. It reads the JWT from the mounted file and calls the AWS STS `AssumeRoleWithWebIdentity` API.

- Validation & Access: AWS STS validates the JWT against the cluster’s OIDC provider. If the signature matches and the IAM Role’s trust policy allows it, STS returns short-lived, temporary AWS credentials to the Pod.
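The SDK side of this handshake can be sketched with the standard library alone. This is a minimal illustration, not the SDK's actual implementation: the function names and the `irsa-demo` session name are hypothetical, and the sketch only assembles the `AssumeRoleWithWebIdentity` query parameters instead of sending them. The JWT payload is decoded without signature verification, purely to inspect the claims that STS will later validate.

```python
import base64
import json


def load_injected_token(env: dict) -> str:
    """Read the projected ServiceAccount JWT from the webhook-injected path."""
    with open(env["AWS_WEB_IDENTITY_TOKEN_FILE"], encoding="utf-8") as fh:
        return fh.read().strip()


def decode_jwt_claims(token: str) -> dict:
    """Decode the payload segment of a JWT for inspection (no verification)."""
    payload_b64 = token.split(".")[1]
    # JWT segments are unpadded base64url; restore the padding first.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))


def build_sts_request(env: dict, token: str, session_name: str = "irsa-demo") -> dict:
    """Assemble the query parameters for STS AssumeRoleWithWebIdentity."""
    return {
        "Action": "AssumeRoleWithWebIdentity",
        "Version": "2011-06-15",
        "RoleArn": env["AWS_ROLE_ARN"],
        "RoleSessionName": session_name,  # hypothetical name, chosen by the caller
        "WebIdentityToken": token,
    }
```

Inside a Pod, `env` would simply be `os.environ`. The `sub` claim returned by `decode_jwt_claims` has the form `system:serviceaccount:<namespace>:<serviceaccount>`, which is the value the IAM Role's trust policy is matched against.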

IAM Roles for Service Accounts IRSA Lab

IAM Roles for Service Accounts IRSA across multi-cloud environments Lab

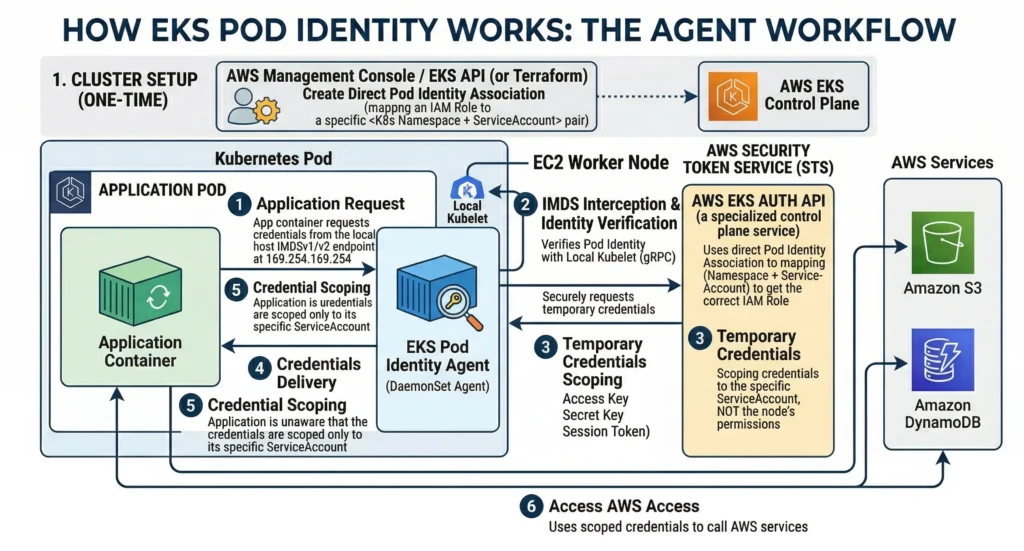

2. Amazon EKS Pod Identity – The Modern Architecture

To eliminate the operational friction of OIDC federation, namely complex IAM trust policies and certificate thumbprint management, AWS released EKS Pod Identity. This is the recommended standard for modern EC2-based workload identity.

Instead of relying on the Kubernetes API server to inject tokens, it utilizes a simplified, highly secure agent-based architecture that shifts the complexity entirely to the AWS Control Plane.

How EKS Pod Identity Works: The Agent Workflow

- The DaemonSet Agent: Deploy the Amazon EKS Pod Identity Agent (an official EKS Add-on) as a `DaemonSet` across your EC2 worker nodes.

- API Associations (No YAML Annotations): Instead of annotating Kubernetes `ServiceAccount` YAML files, you use the AWS EKS API (or Terraform) to create a direct “Pod Identity Association.” This maps an IAM Role directly to a Kubernetes Namespace + ServiceAccount pair.

- Credential Request (Not IMDS): For Pods whose ServiceAccount has an association, EKS injects the environment variables `AWS_CONTAINER_CREDENTIALS_FULL_URI` and `AWS_CONTAINER_AUTHORIZATION_TOKEN_FILE` and mounts a projected ServiceAccount token. When the AWS SDK (inside your application Pod) attempts to retrieve credentials, its container credential provider calls the agent’s node-local endpoint at `169.254.170.23` instead of falling through to the EC2 Instance Metadata Service (IMDS) at `169.254.169.254`.

- Local Provisioning: The EKS Pod Identity Agent running on the host node receives this request together with the Pod’s ServiceAccount token, then securely requests temporary credentials from the EKS Auth API (a specialized AWS control plane service), which validates the token against the association.

- Seamless Delivery: The agent delivers these temporary credentials back to the Pod. The application remains completely unaware that the credentials were scoped to its specific `ServiceAccount` rather than inherited from the underlying EC2 node’s permissions.
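From the application's point of view, talking to the agent is an ordinary container-credentials lookup driven by two environment variables that EKS injects into associated Pods, `AWS_CONTAINER_CREDENTIALS_FULL_URI` and `AWS_CONTAINER_AUTHORIZATION_TOKEN_FILE`. The sketch below shows roughly what an SDK does with them. It is an illustration under those assumptions, with a hypothetical function name, and it only builds the HTTP request so that it can be read and run outside a cluster.

```python
import urllib.request


def build_agent_request(env: dict) -> urllib.request.Request:
    """Build the credentials request an SDK would send to the node-local agent."""
    # The projected ServiceAccount token proves which Pod is asking.
    with open(env["AWS_CONTAINER_AUTHORIZATION_TOKEN_FILE"], encoding="utf-8") as fh:
        token = fh.read().strip()
    return urllib.request.Request(
        env["AWS_CONTAINER_CREDENTIALS_FULL_URI"],
        headers={"Authorization": token},
        method="GET",
    )
```

Inside a Pod, passing the result to `urllib.request.urlopen` should return a JSON document with the temporary credentials and their expiry. In practice you never write this yourself: a sufficiently recent AWS SDK performs the same lookup automatically.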

Amazon EKS Pod Identity Lab

While IRSA is highly secure, it introduces significant operational overhead that drives modern teams toward the newer EKS Pod Identity agent.

1. The Complex Trust Policy (The IaC Nightmare)

For IRSA to be secure, the IAM Role must verify exactly which namespace and which ServiceAccount is asking for access. This requires a notoriously brittle StringEquals condition in your Terraform/CloudFormation. If a developer renames their ServiceAccount, their AWS access instantly breaks:

    "Condition": {
      "StringEquals": {
        "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53:sub": "system:serviceaccount:production:my-app-sa",
        "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53:aud": "sts.amazonaws.com"
      }
    }
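To show where that condition sits and why a rename breaks it, here is a small sketch that generates the complete trust policy and checks a ServiceAccount against it. The helper names are hypothetical, and the account ID and issuer reuse the placeholder values from the snippet above.

```python
import json


def irsa_trust_policy(account_id: str, issuer: str, namespace: str, service_account: str) -> dict:
    """Build an IRSA trust policy pinned to one namespace/ServiceAccount pair."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{issuer}"},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {"StringEquals": {
                f"{issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
                f"{issuer}:aud": "sts.amazonaws.com",
            }},
        }],
    }


def subject_allowed(policy: dict, issuer: str, namespace: str, service_account: str) -> bool:
    """Mimic the StringEquals check on the token's `sub` claim."""
    expected = policy["Statement"][0]["Condition"]["StringEquals"][f"{issuer}:sub"]
    return expected == f"system:serviceaccount:{namespace}:{service_account}"


ISSUER = "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53"
policy = irsa_trust_policy("111122223333", ISSUER, "production", "my-app-sa")
print(json.dumps(policy, indent=2))
```

With this policy, `subject_allowed(policy, ISSUER, "production", "my-app-sa")` is true, while the same call with a renamed ServiceAccount such as `my-app-sa-v2` is false. That exact-match behaviour is the brittleness described above.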

2. OIDC Thumbprint Management

AWS relies on TLS certificate thumbprints to trust an OIDC endpoint. Historically, if the root Certificate Authority (CA) behind the EKS OIDC endpoint rotated, the thumbprint changed, and IRSA broke cluster-wide. (Note: AWS has since mitigated this for EKS by validating the endpoint against its own set of trusted root CAs, but custom/on-prem OIDC setups whose certificates are not signed by one of those CAs still suffer from this.)

3. The Legacy SDK Version Trap

Because IRSA relies on the AWS_WEB_IDENTITY_TOKEN_FILE environment variable, your application must use an AWS SDK version recent enough to support web identity tokens. If a developer deploys a legacy application using an outdated version of boto3 (Python) or of the AWS Java SDK v1, or an old AWS CLI version, the app will fail to authenticate because it simply doesn’t know how to look for the injected JWT file.
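When an application cannot authenticate under IRSA, it helps to rule out a missing injection before blaming the SDK version. The following is a minimal preflight sketch with a hypothetical function name. It only checks that the two variables are present and that the token file exists, and says nothing about whether the SDK can use them.

```python
import os


def irsa_injection_problems(env: dict) -> list:
    """Return human-readable reasons why IRSA injection looks incomplete."""
    problems = []
    for name in ("AWS_ROLE_ARN", "AWS_WEB_IDENTITY_TOKEN_FILE"):
        if not env.get(name):
            problems.append(f"{name} is not set")
    token_file = env.get("AWS_WEB_IDENTITY_TOKEN_FILE")
    if token_file and not os.path.isfile(token_file):
        problems.append(f"token file {token_file} does not exist")
    return problems


if __name__ == "__main__":
    issues = irsa_injection_problems(dict(os.environ))
    print("IRSA injection looks complete" if not issues else "\n".join(issues))
```

If this reports no problems and the application still falls back to the node's permissions or fails outright, an SDK that predates web identity support becomes the likelier cause.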

If EKS Pod Identity is the new standard, why do we still need IRSA?

Even though AWS recommends EKS Pod Identity for modern EC2 workloads, IRSA is not dead. It remains the better, and sometimes the only, option in these three scenarios:

- AWS Fargate: Fargate is serverless. You cannot run DaemonSets (background agents) on Fargate nodes. Because EKS Pod Identity relies on a DaemonSet agent, it is completely unsupported on Fargate. IRSA is the only way to give Fargate Pods AWS permissions.

- Cross-Account Hub & Spoke: IRSA makes it incredibly easy for a Pod in “Cluster A” (Account 1) to assume an IAM Role in “Account 2”. EKS Pod Identity is currently much more difficult to configure across AWS account boundaries.

- EKS Anywhere / Hybrid Cloud: If you are running EKS on-premises (VMware or Bare Metal) and need your local Pods to securely talk to AWS S3, IRSA’s OIDC federation allows those off-cloud Pods to authenticate without needing permanent IAM Access Keys stored in your data center.

The Trust Policy Simplification: With IRSA, your IAM Role Trust Policy required a complex, brittle `StringEquals` condition matching an exact OIDC URL and ServiceAccount name. With EKS Pod Identity, you completely drop the OIDC provider. Your IAM Role simply needs to trust the universal EKS principal `pods.eks.amazonaws.com`.

- Note: You must also ensure the `sts:TagSession` action is allowed in the trust policy, or the association will fail silently.
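For comparison with the IRSA condition block, a Pod Identity trust policy can be sketched as follows. The helper names are hypothetical. The policy trusts the `pods.eks.amazonaws.com` service principal and allows both `sts:AssumeRole` and `sts:TagSession`.

```python
import json


def pod_identity_trust_policy() -> dict:
    """Build a trust policy for a role used through EKS Pod Identity."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "pods.eks.amazonaws.com"},
            # sts:TagSession is required in addition to sts:AssumeRole.
            "Action": ["sts:AssumeRole", "sts:TagSession"],
        }],
    }


def allows_tag_session(policy: dict) -> bool:
    """Check that some statement trusting the EKS principal permits sts:TagSession."""
    for stmt in policy.get("Statement", []):
        actions = stmt.get("Action", [])
        if isinstance(actions, str):
            actions = [actions]
        if (stmt.get("Effect") == "Allow"
                and stmt.get("Principal", {}).get("Service") == "pods.eks.amazonaws.com"
                and "sts:TagSession" in actions):
            return True
    return False


print(json.dumps(pod_identity_trust_policy(), indent=2))
```

Note that nothing in this policy names a cluster, namespace, or ServiceAccount. A check like `allows_tag_session` could run in CI to catch the missing-action mistake before a role is deployed.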

Architecture Comparison Summary

| Feature / Mechanism | IAM Roles for Service Accounts (IRSA) | EKS Pod Identity (Modern Standard) |
| --- | --- | --- |
| Core Technology | OIDC Federation & Mutating Webhook | DaemonSet Agent & EKS Auth API |
| Credential Retrieval | STS `AssumeRoleWithWebIdentity` | Node-local agent endpoint backed by the EKS Auth API |
| IAM Trust Policy Principal | The specific EKS Cluster’s OIDC Provider | `pods.eks.amazonaws.com` |
| Cluster Dependency | Requires an IAM OIDC provider for the cluster’s public OIDC endpoint | No external OIDC dependencies |
| Best Used For | Legacy clusters, Fargate, or multi-cloud workloads | Modern EC2-based Amazon EKS deployments |
| Per-Cluster OIDC Provider Required? | Yes. Every EKS cluster has its own unique OpenID Connect (OIDC) issuer URL, and for IAM to trust a request from a Pod you must create a “Provider” in IAM that matches that specific cluster’s URL. If you want one IAM Role to be used across 10 clusters via IRSA, the Role’s Trust Policy must explicitly list all 10 OIDC provider ARNs, which often leads to hitting the IAM Trust Policy size limit (2,048 characters by default). | No. You don’t create OIDC providers in IAM; the IAM Role simply trusts the EKS service (`pods.eks.amazonaws.com`). The relationship is managed via an “Association” on the EKS cluster, so the IAM Role doesn’t need to “know” about the cluster’s specific identity provider. |
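The trust-policy size concern in the last table row can be made concrete with a rough calculation. This sketch generates one IRSA statement per cluster and measures the compact JSON length against the 2,048-character figure quoted above. The issuer IDs are made-up placeholders, the helper names are hypothetical, and the exact way IAM counts characters is treated here as an assumption (compact JSON with no whitespace).

```python
import json

TRUST_POLICY_LIMIT = 2048  # default limit quoted in the table above


def multi_cluster_trust_policy(account_id: str, issuers: list, namespace: str, sa: str) -> dict:
    """One Allow statement per cluster OIDC issuer, all for the same ServiceAccount."""
    statements = []
    for issuer in issuers:
        statements.append({
            "Effect": "Allow",
            "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{issuer}"},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {"StringEquals": {
                f"{issuer}:sub": f"system:serviceaccount:{namespace}:{sa}",
                f"{issuer}:aud": "sts.amazonaws.com",
            }},
        })
    return {"Version": "2012-10-17", "Statement": statements}


def policy_size(policy: dict) -> int:
    """Length of the policy as compact JSON (assumes whitespace is not counted)."""
    return len(json.dumps(policy, separators=(",", ":")))


def fake_issuers(count: int) -> list:
    """Placeholder issuer URLs with made-up 32-character IDs."""
    return [f"oidc.eks.us-east-1.amazonaws.com/id/{i:032X}" for i in range(count)]


for n in (1, 5, 10):
    size = policy_size(multi_cluster_trust_policy("111122223333", fake_issuers(n), "production", "my-app-sa"))
    print(n, "clusters:", size, "chars,", "over limit" if size > TRUST_POLICY_LIMIT else "within limit")
```

Because each issuer URL appears three times per statement, the policy grows by several hundred characters per cluster. A Pod Identity trust policy stays the same size no matter how many clusters use the role.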

The DevSecOps Advantage & Architect Considerations

By migrating from IRSA to EKS Pod Identity, platform teams can eliminate brittle IAM configurations, reduce the surface area for configuration drift, and establish a cleaner, more resilient DevSecOps pipeline for machine identities.

- No OIDC Management: You completely bypass OIDC discovery endpoints and thumbprint management.

- Simplified IAM Trust Policies: The IAM Role simply needs to trust the principal `pods.eks.amazonaws.com`.

- Streamlined IaC: Managing these mappings in Terraform is highly efficient, utilizing the `aws_eks_pod_identity_association` resource without needing to parse complex JSON trust relationships.

- Webhook Latency Mitigation: STS token validation introduces a slight latency to API calls. Caching mechanisms within the authenticator mitigate this for subsequent requests from the same user.

- Impersonation Risks: If a user can modify the `aws-auth` ConfigMap or EKS Access Entries, they can escalate their privileges by mapping their own IAM user to the `system:masters` group. Access to these must be strictly audited.

At a production-grade DevSecOps level, you must automate and secure this pipeline using Infrastructure as Code (IaC) and follow the principle of least privilege.

- Eliminate Direct IAM User Access via Role Assumption: Never map individual IAM Users directly into the cluster or use them for daily operations. Map IAM Roles to EKS instead, and require engineers to assume those roles via AWS SSO/Identity Center.

- Deprecate `aws-auth` with IaC (Shift-Left Security): Actively migrate existing clusters away from the `aws-auth` ConfigMap. Never hardcode IAM ARNs in manual edits. Utilize Terraform (`aws_eks_access_entry` and `aws_eks_access_policy_association` resources) to map IAM roles to EKS administrators seamlessly and prevent configuration drift.

- Granular RBAC Over `system:masters` (Least Privilege): Avoid the `AmazonEKSClusterAdminPolicy` and mapping roles to the `system:masters` group unless absolutely necessary for break-glass administration, as `system:masters` bypasses all RBAC checks. Instead, create granular K8s `Roles` and map users to restricted namespaces using `RoleBindings`.

- Mandate Modern Machine Identities: Mandate the Amazon EKS Pod Identity Agent for new workloads instead of older IRSA methods to reduce IAM trust policy complexity and OIDC management overhead.

- Unified Auditing & Compliance: Enable EKS Control Plane Logging (specifically the `authenticator` and `audit` log streams) and route them to Amazon CloudWatch. Correlate these logs with AWS CloudTrail to actively monitor modifications to EKS Access Entries and maintain a complete chain of custody.

- Integrate Security Tooling:

- Checkov by Prisma Cloud: A static analysis tool to scan Terraform code and ensure you aren’t over-provisioning EKS Access Entries.

- Teleport / HashiCorp Boundary: For highly secure environments, consider bypassing direct IAM-to-EKS mapping entirely using an identity-aware proxy for short-lived, Just-In-Time (JIT) Kubernetes access.

- OIDC Federation: Modern clusters often integrate external Identity Providers (Okta, Azure AD, Google Workspace) directly into Kubernetes, mapping SSO groups directly to K8s RBAC.

- Tooling Integration:

- AWS IAM Authenticator: The core engine behind the mapping.

- Cross-Account Access: You can map IAM roles from different AWS accounts into a single EKS cluster, provided the cluster’s IAM role trusts the external account (heavily used in Hub-and-Spoke architectures).

Additional Details

Key Components

- AWS STS: Security Token Service, validates the identity.

- EKS API Server: The Kubernetes control plane entry point.

- Access Entries: AWS-native mapping for IAM to K8s.

- RBAC (Role / RoleBinding): K8s native authorization objects.

Key Characteristics

- Decoupled: AuthN (AWS) and AuthZ (K8s) are handled by separate systems.

- Short-lived: The STS-based tokens used to authenticate to the cluster typically expire after 15 minutes, which limits how long a leaked token can be reused.

- API-Driven: With modern Access Entries, identity mappings can be managed via AWS APIs without direct cluster access.

Use Case

- Granting a developer read-only access to a specific Kubernetes namespace to view application logs without giving them access to production secrets or other namespaces.

- Allowing a CI/CD pipeline (like GitHub Actions via OIDC) to assume an IAM role and deploy Helm charts into the cluster.
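The first use case can be sketched as a pair of Kubernetes RBAC objects generated in Python. The role name `log-reader` and the group name `dev-readonly` are hypothetical. The group is whatever Kubernetes group your Access Entry (or `aws-auth` mapping) assigns to the developer's IAM Role.

```python
import json

RBAC_API_GROUP = "rbac.authorization.k8s.io"


def read_only_logs_role(namespace: str, name: str = "log-reader") -> dict:
    """Role that can view Pods and their logs, but not Secrets, in one namespace."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "Role",
        "metadata": {"name": name, "namespace": namespace},
        "rules": [{
            "apiGroups": [""],  # core API group
            "resources": ["pods", "pods/log"],
            "verbs": ["get", "list", "watch"],
        }],
    }


def bind_role_to_group(namespace: str, role_name: str, group: str) -> dict:
    """RoleBinding that grants the Role to a Kubernetes group in the same namespace."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{role_name}-binding", "namespace": namespace},
        "subjects": [{"kind": "Group", "name": group, "apiGroup": RBAC_API_GROUP}],
        "roleRef": {"kind": "Role", "name": role_name, "apiGroup": RBAC_API_GROUP},
    }


manifests = [
    read_only_logs_role("my-app"),
    bind_role_to_group("my-app", "log-reader", "dev-readonly"),
]
print(json.dumps(manifests, indent=2))
```

Each manifest can be written to a JSON file and applied with `kubectl apply -f`. Because both objects are namespaced, the developer gains nothing in other namespaces, and Secrets are simply absent from the rule list.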

Benefits

- Centralized Identity: No need to create separate user accounts inside Kubernetes; you leverage your existing AWS IAM infrastructure.

- Enhanced Security: No static passwords or long-lived credentials stored in kubeconfig files.

- Compliance: Easy tracking of who did what using AWS CloudTrail and K8s Audit Logs.

Best Practices

- Never use IAM Users directly for daily operations; map IAM Roles to EKS, and have users assume those roles.

- Adopt the EKS Access API and migrate away from the `aws-auth` ConfigMap.

- Create granular K8s Roles bound to specific namespaces rather than using ClusterRoles.

- Regularly rotate the IAM keys of any service accounts used in external CI/CD systems (or better yet, use OIDC federation).

Technical Challenges

- Troubleshooting `Unauthorized` errors can be tricky because the failure could be at the AWS CLI token generation, the IAM mapping, or the RBAC permissions.

- Managing state drift if some engineers manually edit the `aws-auth` ConfigMap while Terraform expects a different state.

Limitations

- IAM policies cannot directly restrict access to specific Kubernetes resources (e.g., you cannot write an AWS IAM policy to restrict access to a K8s namespace; IAM only gets you into the cluster, RBAC must do the rest).

Common Issues

- “You must be logged in to the server (Unauthorized)”: Usually means your AWS token expired, your AWS CLI is using the wrong profile, or the IAM entity is not mapped in EKS.

- “Forbidden: User cannot list resource in API group”: Means authentication succeeded, but K8s RBAC is blocking you. You need a RoleBinding.
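The distinction between these two errors can be captured in a tiny triage helper. This is only a sketch with a hypothetical function name. It matches on the message fragments quoted above and tells you which layer to investigate first.

```python
def classify_kubectl_error(message: str) -> str:
    """Map a kubectl error message to the layer that most likely rejected it."""
    text = message.lower()
    if "unauthorized" in text or "must be logged in" in text:
        # Authentication failed: check the token, AWS profile, and IAM-to-EKS mapping.
        return "authentication"
    if "forbidden" in text:
        # Authentication succeeded: check Roles and RoleBindings.
        return "authorization"
    return "unknown"


print(classify_kubectl_error("error: You must be logged in to the server (Unauthorized)"))
print(classify_kubectl_error('pods is forbidden: User "dev" cannot list resource "pods"'))
```

An "authentication" result points at the AWS side (credentials, profile, Access Entries), while "authorization" points at Kubernetes RBAC.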

Problems and Solutions

- Problem: Accidentally deleting the `aws-auth` ConfigMap and locking everyone out.

- Solution: As long as you have access to the IAM Role that created the cluster, you can still log in and fix it. Better yet, switch to EKS Access Entries where AWS APIs prevent accidental lockout of cluster administrators.